The real cost of random I/O

The random_page_cost was introduced

~25 years ago, and since the very beginning it’s set to 4.0 by default.

The storage changed a lot since then, and so did the Postgres code.

It’s likely the default does not quite match the reality. But what

value should you use instead? Flash storage is much better at handling

random I/O, so maybe you should reduce the default? Some places go as

far as recommending setting it to 1.0, same as seq_page_cost. Is this

intuition right?

Let’s do an experiment to “measure” the cost of reading a random page, compared to a sequential read. We can do it like this:

generate a table, with a random column and an index

read the table through a sequential scan

read the table through an index scan

calculate time for a sequential and a random page read

Shared buffers are left at default value (128MB). Table is an order of

magnitude larger than shared buffers, but still relatively small so

that the runs are fairly short. The effective_cache_size is set to

128MB to match shared buffers, and we enable direct I/O, to eliminate

the effect of page cache.

These choices make caching effects much easier to predict. Estimates of

page hits and misses are going to be very accurate (see

cost_index

and index_pages_fetched).

Which is what we need. It does not make much sense to evaluate costing

if the inputs are way off. Garbage in, garbage out.

This gives you a ~4.4GB table:

CREATE TABLE t (a int, b int) WITH (fillfactor=10);

INSERT INTO t SELECT i, i FROM generate_series(1, 10_000_000) s(i) ORDER BY random();

CREATE INDEX ON t (a);

VACUUM ANALYZE t;

We can run a sequential scan

EXPLAIN (ANALYZE, TIMING OFF) SELECT * FROM t;

QUERY PLAN

-----------------------------------------------------------------------------------------

Seq Scan on t (cost=0.00..554546.12 rows=10000012 width=8)

(actual rows=10000000.00 loops=1)

Buffers: shared read=454546

Planning:

Buffers: shared hit=38 read=11

Planning Time: 0.597 ms

Execution Time: 708.420 ms

(6 rows)

and also an index scan (which does almost perfect random I/O):

SET enable_seqscan = off;

SET effective_cache_size = '128MB';

EXPLAIN (ANALYZE, TIMING OFF) SELECT * FROM t ORDER BY a;

QUERY PLAN

-----------------------------------------------------------------------------------------

Index Scan using t_a_idx on t (cost=0.43..38900782.85 rows=10000012 width=8)

(actual rows=10000000.00 loops=1)

Index Searches: 1

Buffers: shared hit=342789 read=9684524

Planning:

Buffers: shared hit=44 read=18

Planning Time: 0.957 ms

Execution Time: 392976.430 ms

(7 rows)

The sequential scan does 454546 sequential reads, while the index scan does about 9660336 random reads (pages are visited repeatedly, once per tuple). This allows us to calculate the time for a sequential and a random read of one page:

seq_page_time = (708.420 ms / 454546) = 1.559 us

random_page_time = (392976.430 ms / 10000000) = 39.298 us

We often say that cost is not time, and that the mapping is complex.

But in this trivial case we can calculate random_page_cost simply by

dividing these two timings

random_page_cost = random_page_time / seq_page_time = 39.298 / 1.559 = 25.2

That’s clearly significantly higher than the default value 4.0.

This may be surprising, and it directly contradicts the recommendation

to lower the random_page_cost value on SSDs.

This calculation is a bit rough, as it ignores other parts included in

the cost calculation (per cost_index). We can “undo” those components

by subtracting per-tuple cpu_tuple_cost, cpu_index_tuple_cost and

cpu_operator_cost. And then calculate the random_page_cost so that

the ratio of total costs matches the timings.

The CPU costs are much smaller than the I/O costs, so the result won’t be much different. There’s also the question how “accurate” are those other per-tuple costs. We’ll simply ignore this.

Experiment

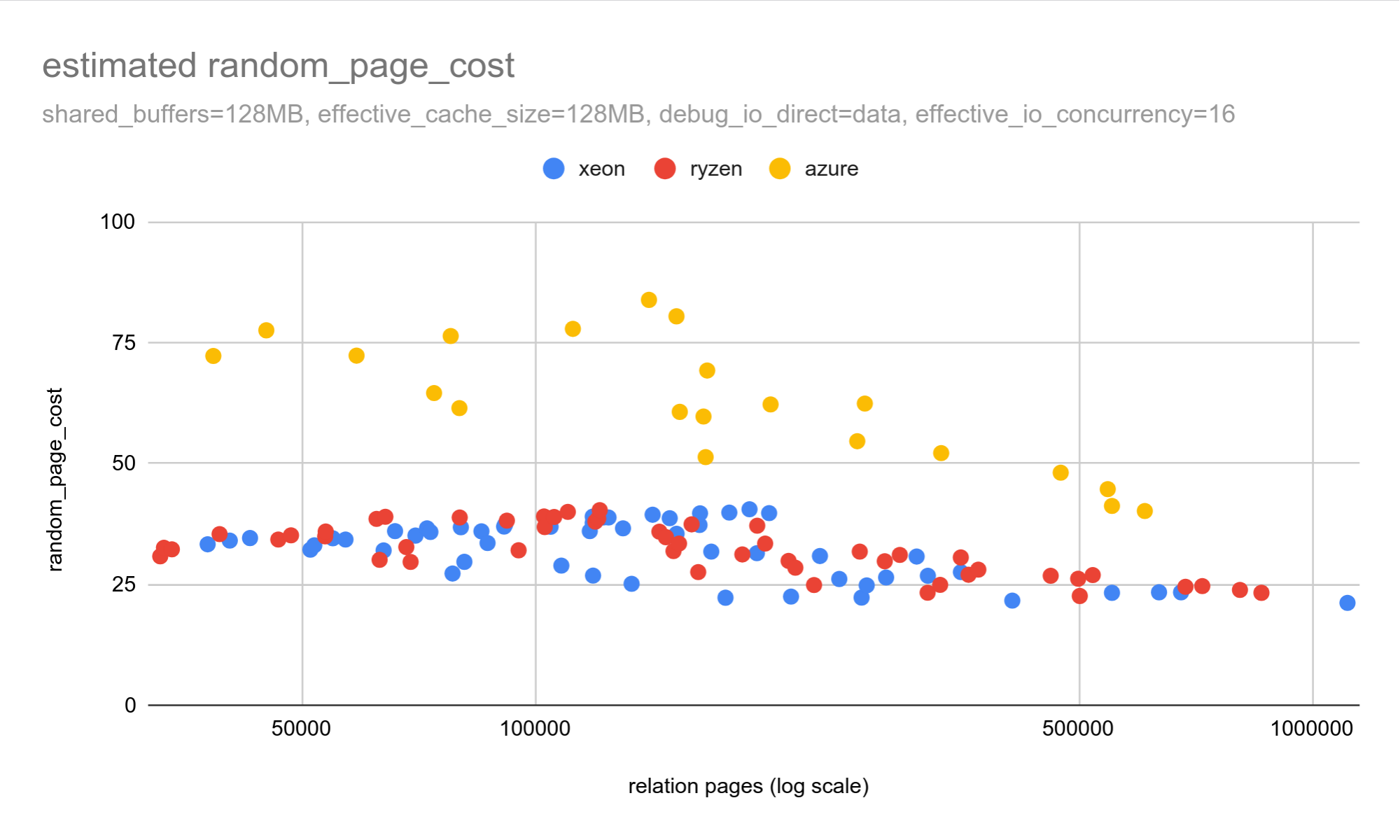

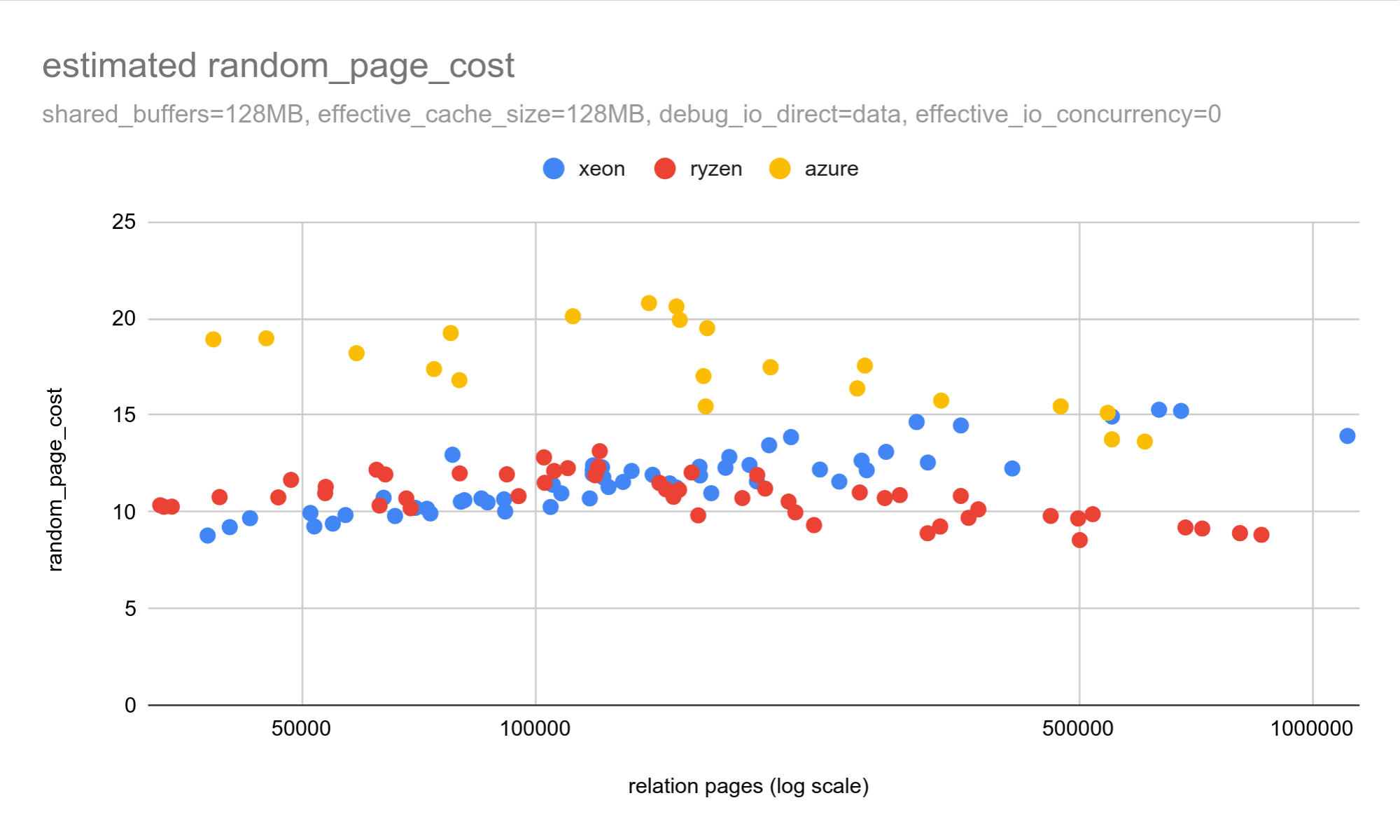

I repeated this exercise on three systems, using tables with 5-25M rows and random fillfactor. Two systems had local SSDs (ryzen, xeon) and one on Azure with remote SSD.

Here is a chart showing the estimated random_page_cost values:

On local SSDs, estimated random_page_cost values are ~25-35. That

matches the earlier result, and it’s almost an order of magnitude

higher than the default value. With remote SSDs, the difference is even

larger.

What does this mean in practice? The cost parameters are used for

query planning, and if the random_page_cost value is an order of

magnitude off, will it affect the plans? It would be weird if it did

not, of course.

Let’s consider sequential and index scans, the two fundamental plans doing sequential and random I/O. The cost and duration scale linearly with the number of pages accessed.

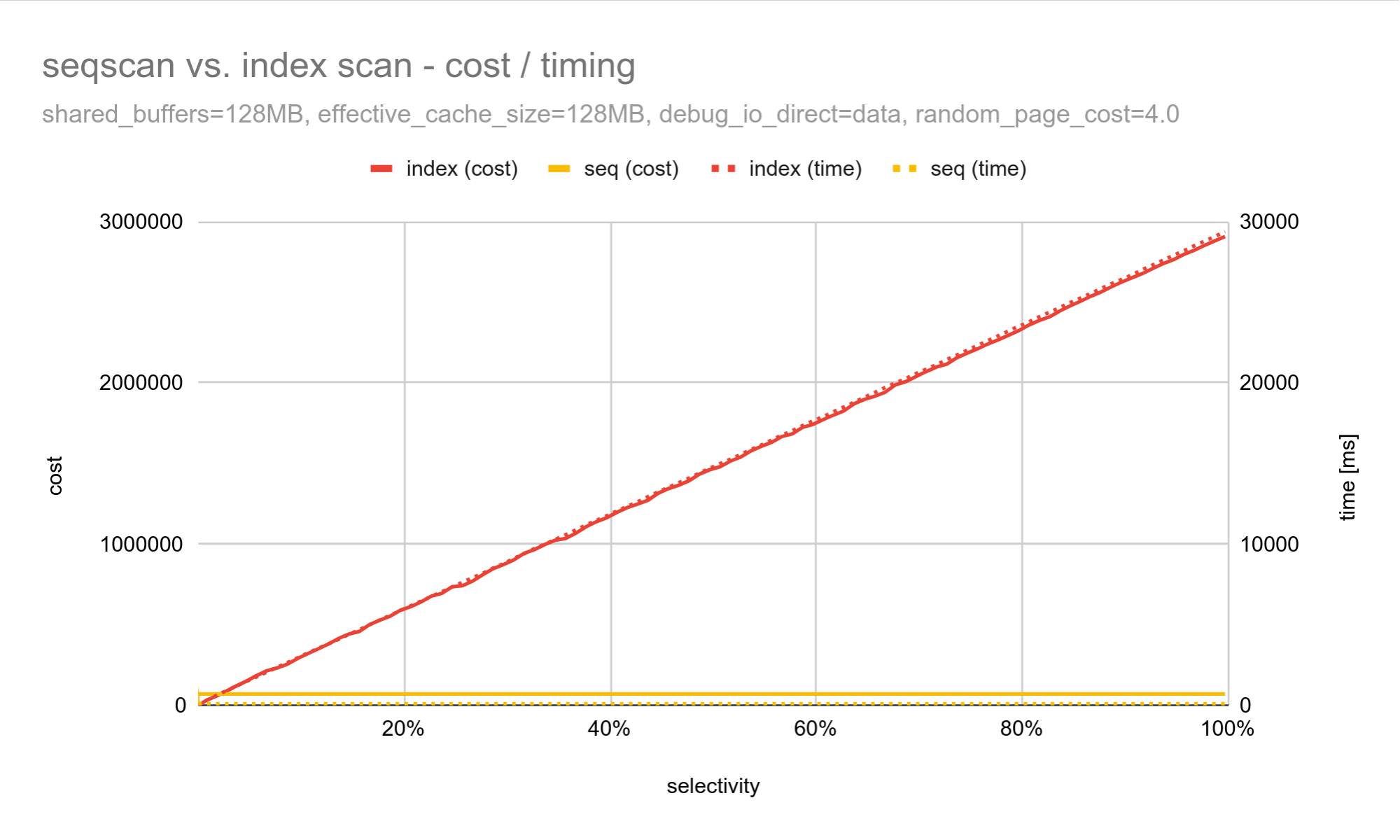

Here is a query returning a variable part of a table:

SELECT * FROM t WHERE a BETWEEN $1 AND $2;

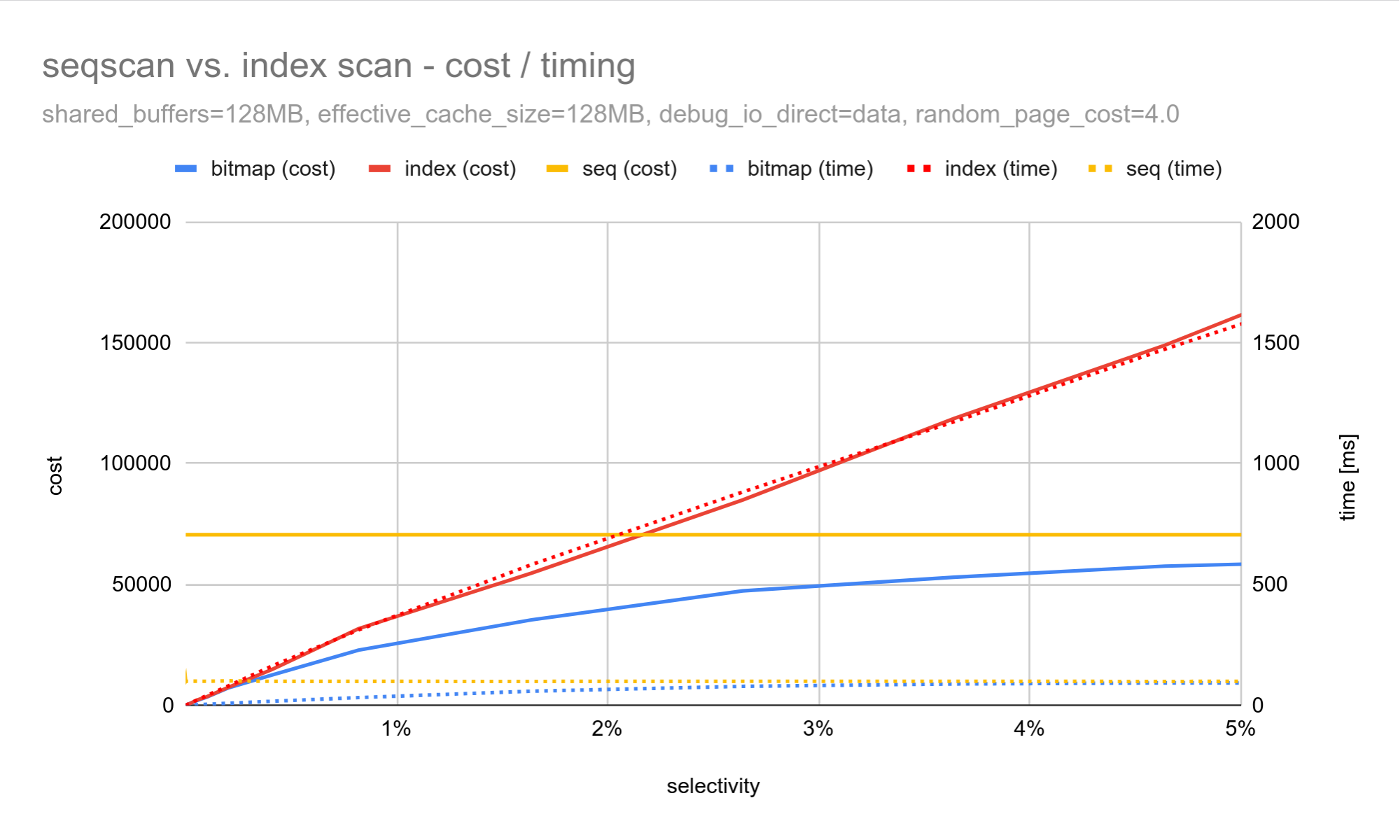

If we plot the cost and duration for different fractions (matching the

BETWEEN clause) for a table with 10M rows, we get a chart like this:

The x-axis shows the fraction of rows matching the condition, the left and right y-axis show costs and duration.

Due to the extreme index scan cost/duration values it’s hard to see what’s happening in the “interesting” part close to 1%, where the costs (and durations) intersect.

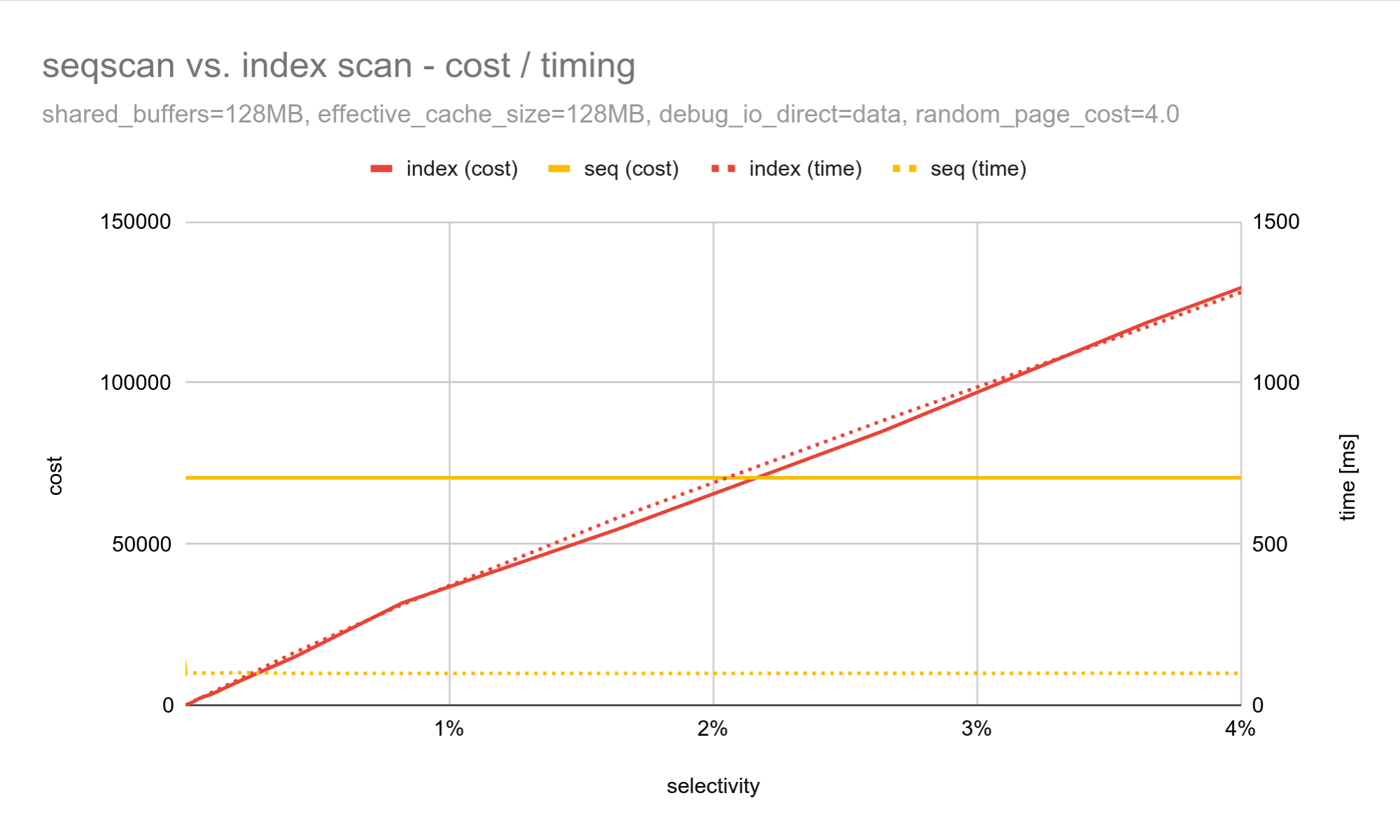

Here’s a chart zooming into that part:

It’s not an accident that the index scan cost and duration have the same

slope. That’s a direct consequence of the values scaling linearly with

the number of pages accessed by the scan. Assuming no cache hits, it

will read one page per tuple, and each page costs random_page_cost.

For a sequential scan the cost and duration are constant, as the scan has to read all pages, no matter how many rows match the condition.

What matters is where the costs (and durations) intersect.

The costs (solid lines) intersect at ~2.2%, where the planner switches to a sequential scan. But the durations (dashed lines) intersect at 0.2%, where the sequential scan becomes faster.

This means the planner will pick the “wrong” plan for selectivities between 0.2% and 2.2%. Close to the 2.2% selectivity the queries may take up to ~10x longer.

The costing is never going to be perfect - it’s a simplified model based on estimates, after all. But the better the costs and durations align, the better. The further apart those selectivities are, the more often we will end up with the wrong plan.

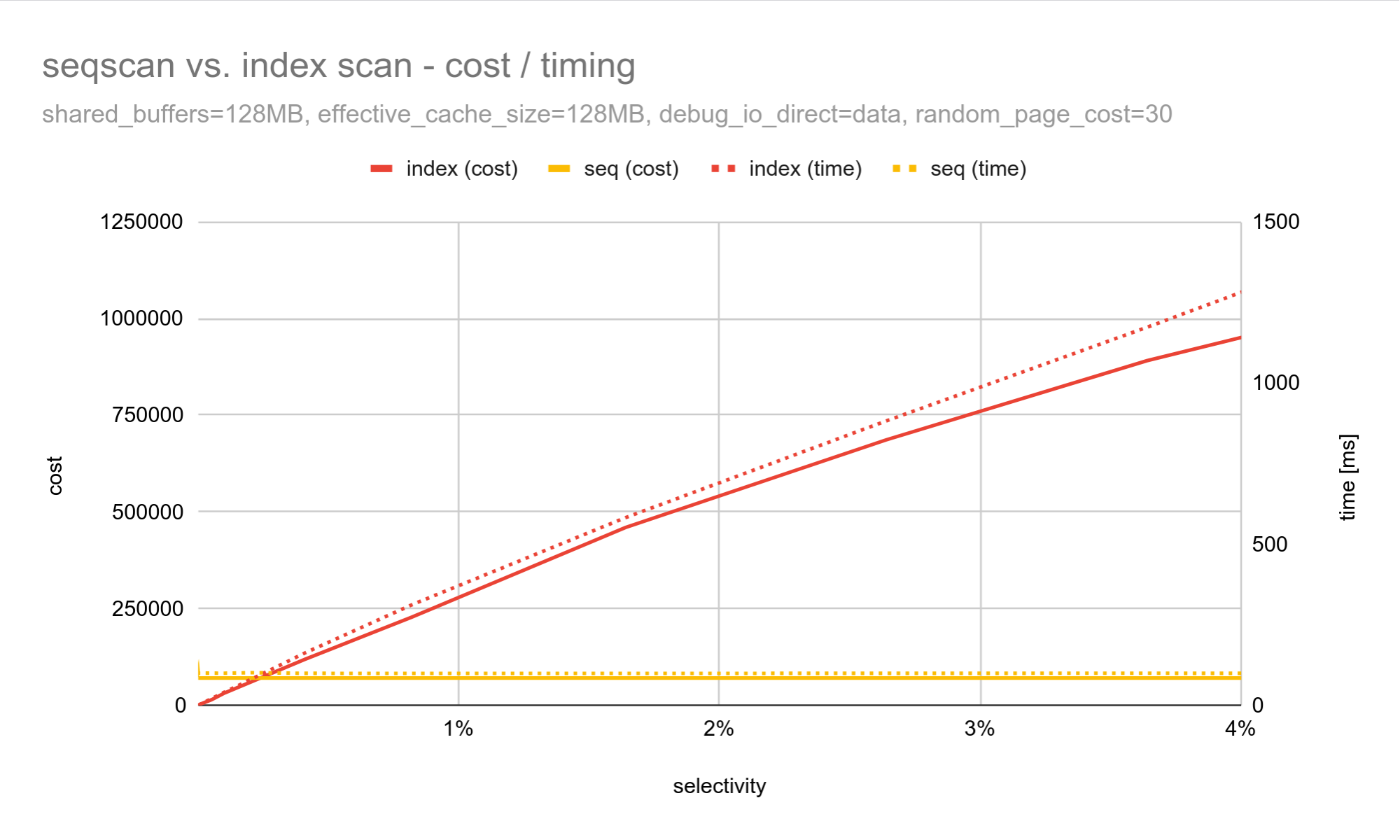

What would the chart look like for random_page_cost = 30.0, the value

suggested by our experiments (for local SSDs)?

The costs and durations align almost perfectly!

Note the range of the left y-axis changed, to handle the ~8x higher index scan costs. This “pushed down” the sequential scan cost, and the cost intersection now aligns with 0.2% almost perfectly.

Bitmap Scans

The 0.2% to 2.2% selectivity “gap” is quite wide, so it may seem the risk of picking an inefficient plan is fairly high. In practice it’s not that bad, thanks to bitmap scans - a variant of index scans suitable for queries accessing many rows.

Here’s a chart with all three scan types (for random_page_cost = 4.0):

The bitmap scan cost is consistently below both the sequential and index scans, except for a tiny area close to 0% where the index scan wins. The duration is consistently lower than the index scan too. Bitmap scans make the access more sequential, and also support I/O prefetching (issuing I/O in advance).

Cost vs. prefetching

Speaking of prefetching, that’s a very interesting topic. It can

significantly improve performance, but the cost model does not actually

consider that at all. Set effective_io_concurrency = 0 to disable

prefetching, the duration may increase 10x but the cost remains exactly

the same.

AFAIK this is mostly due to prefetching being added incrementally over time, to some selected plans (e.g. bitmap scans). It wasn’t part of the original cost model. It’s also quite difficult to predict the impact of prefetching (how much it helps a given plan).

Assuming it does not help could be seen as a “defensive” approach. Prefetching itself is relatively safe - it usually massively helps, or it may cause a small regression. Changing a cost brings the possibility of picking a different plan, and that’s much riskier. Plan flips are a relatively common performance issue, unfortunately.

What’s worse, this does not affect all scan types equally. Bitmap scans supported prefetching the longest. Sequential scans now do prefetching thanks to AIO (before that it relied on the kernel read-ahead). You can see some of the effects in my post about AIO.

Index scans don’t do any prefetching yet, but there’s a good chance it’ll change in Postgres 19.

Adjusting effective_io_concurrency affects duration, not costs. It

changes where the durations intersect, without changing the costs. It’s

the opposite effect than for random_page_cost, which changes the cost

and not duration.

But that means the two GUCs work against each other! Changing the

prefetching (enabling/disabling) changes the “correct”

random_page_cost value.

Here’s a chart showing the random_page_cost with disabled prefetching:

It’s the same experiment as the first chart in this post, but if you compare the results the values dropped from ~30 to ~10. Disabling prefetching made random I/O ~3x cheaper!

That may seem bizarre. Prefetching is meant to make random I/O cheaper! But index scans do not support prefetching yet, while sequential scans do. So disabling prefetching makes seqscans slower, while not changing index scans at all. Sequential reads get slower, we see it as random reads getting more expensive.

Of course, prefetching does not make individual random I/O any cheaper. It just makes it look cheaper because the executor does not need to wait that long for the data. It’s similar to how parallelism lowers query costs by doing work concurrently.

We’ll probably need to do something about this, and start considering prefetching in costing. If we have two paths, doing the same amount of I/O, but one can do prefetching, it should look “cheaper”. Estimating the effects of prefetching is tricky, though.

Could lowering random_page_cost be fine?

We know the “true cost” of random I/O is much higher than 4.0, the

default value. On fast local SSDs it’s ~30, on cloud storage it’s even

higher (latency clearly matters). Maybe don’t lower random_page_cost

simply because “SSDs are fast for random I/O”?

Does that mean it’s always wrong, though? Certainly not. Some of the

people recommending lower random_page_cost values are certainly very

experienced and respectable engineers and admins. They must have a good

justification for the recommendation. What could it be?

I can think of a couple reasons.

Random but focused

We’ve assumed the data is not cached, triggering a lot of random I/O. But many real-world systems don’t behave like that. The data set may be huge, but the “active set” (accessed by queries) is much smaller, and comfortably fits into RAM. That means most random reads won’t do physical I/O at all, and will be very cheap.

In fact, doing sequential reads could be terrible. Reading the whole table would require physical I/O, especially if the table is huge and does not fit into RAM. It could even push some of the hot data from memory. It can be better to prefer random I/O against a small cached data set, rather than uncached sequential I/O.

As pointed out in the pgsql-hackers thread, OLTP systems tend to have a very high cache ratios. There is also an asymmetry when choosing whether to stick to the index scan or switch to a sequential scan due to a small increase of selectivity. If we switch to a seqscan too early, we suddenly have to pay the full price. With an index scan the cost increases linearly, and gradual degradation is much better than a performance cliff.

Our ability to estimate this during planning is very limited. The

effective_cache_size hint gives us a very rough idea how much data

fits into cache, but we don’t know if a particular query touches only

cached data. Lowering random_page_cost is an indirect way to tell

the optimizer we expect this to happen.

Planning in isolation

Another interesting detail is that we plan queries in isolation, as if there were no other queries. Picking a sequential scan may be a win for our query. But if it pushes data needed by other queries from the cache, it may be a terrible loss.

Plans doing random I/O (index scans) are often “more focused”, and need to access a much smaller subset of data. Which reduces the cache pressure and makes it more likely the active set will fit into memory.

Wrong estimates

The final reason I can think of is incorrect estimates. The examples in this post used a very simple data set (uniform distribution). That allows near perfect estimates in the optimizer. Realistic data sets are much more complicated, with correlations etc. Then estimates can be quite terrible, pushing the optimizer to pick the “wrong” plan.

One way to mitigate this is adjusting cost parameters (like for example

random_page_cost) to “wrong” values that happen to pick the right

query plan. However, it’s a blunt instrument, and it’s usually specific

to individual data sets and/or queries (not all estimates will be off).

Conclusions

Is lowering random_page_cost a good or a bad idea? Judging solely by

the actual I/O costs, random I/O is much more expensive than the

default. If the change is justified only by “SSDs are fast” then it’s

misguided.

In practice it may be the right thing to do, for all the reasons

mentioned in the last section. If you’re planning to tune this GUC

strongly recommend monitoring the performance, and using it to evaluate

the effect of changing the random_page_cost value. Or any other

parameter, for that matter.

You should have some monitoring - either a dedicated monitoring system

tracking query latencies, or at least an ad hoc pg_stat_statements

snapshots.

There’s plenty of room for improvement and patches. If you read through

the thread

I started about changing the random_page_cost default, there are at

least these ideas:

separating non-IO costs from

random_page_cost- Therandom_page_costparameter is used for costing various operations involving random I/O, and accounts for some additional overheads too (e.g. the effects of I/O combining, some CPU costs, etc.). It seems reasonable to separate those into dedicated GUC parameters.improving statistics of cached data Our ability to estimate which data (or at least what fraction) is cached is very limited. We have

effective_cache_size, but that’s not enough. It’s used to estimate if data accessed by a query was cached by the same query.considering prefetching in costing As of now, the costing entirely ignores prefetching. Yet prefetching can have massive impact on performance, often with an improvement of an order of magnitude (or more). That seems significant enough to be reflected in the cost.

Each of these could be a nice topic for research and a patch proposal.